Unlocking Data Insights: The Best Python Machine Learning Libraries for Data Analysis

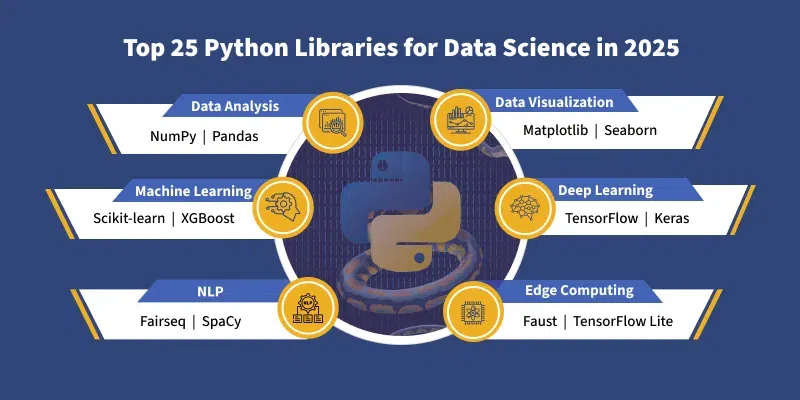

In the rapidly evolving landscape of data science and artificial intelligence, Python has become the go-to programming language, largely because of its simplicity, its versatility, and, above all, its rich ecosystem of powerful libraries. For aspiring and seasoned data analysts alike, knowing which Python machine learning libraries to use for data analysis, and how to use them well, is key to extracting meaningful insights, building predictive models, and making data-driven decisions. This guide covers the essential tools that help data professionals turn raw data into actionable intelligence, from fundamental data manipulation to deep learning frameworks.

The Foundation: Essential Libraries for Data Manipulation and Numerical Computing

Before diving into complex machine learning algorithms, a solid understanding of Python's foundational libraries for data handling is crucial. These tools serve as the bedrock upon which all advanced data analysis workflows are built, enabling efficient data cleaning, transformation, and preparation.

Pandas: The Data Analysis Powerhouse

For anyone working with structured data, Pandas is an indispensable library. It introduces two primary data structures: the Series (a one-dimensional labeled array) and the DataFrame (a two-dimensional labeled data structure with columns of potentially different types). Pandas excels at tasks like:

- Data Loading and Saving: Seamlessly import data from various formats like CSV, Excel, SQL databases, and JSON.

- Data Cleaning and Preprocessing: Handle missing data, remove duplicates, filter rows, and perform complex transformations. This is critical for any predictive modeling task.

- Data Manipulation: Grouping, merging, joining, and reshaping datasets with intuitive syntax.

- Exploratory Data Analysis (EDA): Calculate descriptive statistics, identify outliers, and prepare data for visualization.
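As a minimal sketch of the tasks above, the following snippet loads a tiny inline CSV (hypothetical data standing in for a real file), removes a duplicate row, fills a missing value, and aggregates by group. The column names and values are illustrative assumptions; in practice you would pass a file path to `pd.read_csv` or use another reader such as `pd.read_excel` or `pd.read_sql`.

```python
import io

import pandas as pd

# Small inline CSV standing in for a real file (hypothetical data).
raw_csv = io.StringIO(
    "region,product,units,price\n"
    "North,A,10,2.5\n"
    "North,B,,3.0\n"
    "South,A,7,2.5\n"
    "South,A,7,2.5\n"
    "East,B,4,3.0\n"
)

# Data loading: read_csv accepts file paths, URLs, or file-like objects.
df = pd.read_csv(raw_csv)

# Data cleaning: drop exact duplicate rows and fill missing unit counts with 0.
df = df.drop_duplicates()
df["units"] = df["units"].fillna(0)

# Data manipulation: derive a revenue column, then group and aggregate.
df["revenue"] = df["units"] * df["price"]
revenue_by_region = df.groupby("region")["revenue"].sum()

# EDA: quick descriptive statistics for the numeric columns.
summary = df.describe()

print(revenue_by_region)
```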

Practical Tip: Begin every data analysis project by loading your dataset into a Pandas DataFrame. This provides a flexible and efficient structure for all subsequent operations, from initial inspection to feature engineering. Mastering Pandas is a non-negotiable first step toward using the rest of the data science toolkit effectively.

NumPy: The Numerical Computing Backbone

At the heart of many scientific computing and machine learning libraries lies NumPy (Numerical Python). It provides support for large, multi-dimensional arrays and matrices, along with a collection of high-level mathematical functions to operate on these arrays. While you might not directly interact with NumPy as much as Pandas for high-level data manipulation, its performance-optimized array operations underpin almost every numerical computation in Python's data ecosystem.

- Efficient Array Operations: Perform element-wise operations, linear algebra, Fourier transforms, and random number generation significantly faster than standard Python lists.

- Memory Efficiency: NumPy arrays consume less memory compared to Python lists, which is vital when dealing with large datasets.

- Foundation for Other Libraries: Pandas DataFrames are built on NumPy arrays, and most machine learning libraries accept NumPy arrays as input.
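To make these points concrete, here is a small sketch comparing a Python list with a NumPy array for an element-wise operation, followed by a linear-algebra check and the hand-off from a Pandas column to a NumPy array. The array size and random seed are arbitrary choices for illustration; actual speed and memory gains depend on your data and hardware.

```python
import numpy as np
import pandas as pd

# One million values as a Python list and as a NumPy array.
values_list = list(range(1_000_000))
values_array = np.arange(1_000_000, dtype=np.int64)

# Element-wise operation: a Python-level loop vs. one vectorized expression.
squared_list = [v * v for v in values_list]
squared_array = values_array * values_array

# Memory: the array stores raw 8-byte integers contiguously (8,000,000 bytes),
# while a list stores pointers to separate Python int objects.
array_bytes = values_array.nbytes

# Linear algebra and random number generation from the same library.
rng = np.random.default_rng(seed=0)
matrix = rng.normal(size=(3, 3))
identity_check = matrix @ np.linalg.inv(matrix)

# Pandas interoperability: a DataFrame column converts to a NumPy array.
df = pd.DataFrame({"x": values_array[:5]})
x_np = df["x"].to_numpy()
```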

Expert Insight: Understanding basic NumPy array indexing and slicing can dramatically improve the efficiency of your data manipulation scripts, especially when preparing data for complex machine learning algorithms.
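The indexing patterns that come up most often when preparing features can be sketched as follows, using a small hypothetical feature matrix `X`. Note the distinction drawn in the comments: basic slices are views into the original array, while boolean and integer-array ("fancy") indexing return copies.

```python
import numpy as np

# Hypothetical feature matrix: 6 samples x 3 features.
X = np.arange(18, dtype=float).reshape(6, 3)

# Basic slicing returns a view: first four rows, last two columns.
# Writing into `subset` would also modify X.
subset = X[:4, 1:]

# Boolean masking selects rows where feature 0 exceeds a threshold (a copy).
mask = X[:, 0] > 6
large_rows = X[mask]

# Integer-array indexing picks arbitrary rows (also a copy).
picked = X[[0, 5]]

# Column-wise standardization via broadcasting instead of explicit loops.
X_std = (X - X.mean(axis=0)) / X.std(axis=0)
```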

The Machine Learning Workhorse: Scikit-learn

When it comes to traditional machine learning, Scikit-learn is the standard choice in Python. It provides a wide range of supervised and unsupervised learning algorithms, along with tools for model selection, preprocessing, and evaluation. Its consistent API design makes it user-friendly and efficient for rapid prototyping and deployment.

Comprehensive Algorithm Suite

Scikit-learn covers a vast array of machine learning tasks, making it a versatile tool for various data analysis challenges:

- Classification: Implement algorithms like Logistic Regression, Support Vector Machines (SVMs), Decision Trees, Random Forests, K-Nearest Neighbors (KNN), and Naive Bayes for tasks such as spam detection or image classification.

- Regression: Utilize Linear Regression, Ridge, Lasso, ElasticNet, Decision Tree Regressors, and Gradient Boosting Regressors for predicting continuous values like house prices or stock trends.

- Clustering: Perform unsupervised learning with K-Means, DBSCAN, and Agglomerative Clustering to discover inherent groupings in data (e.g., customer segmentation).

- Dimensionality Reduction: Principal Component Analysis (PCA) reduces the number of features while retaining most of the variance, which can cut noise and support feature engineering; t-SNE is mainly used to visualize high-dimensional data in two or three dimensions.
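A large part of Scikit-learn's appeal is that classifiers, clustering models, and transformers share the same `fit`/`predict`/`transform` conventions. The sketch below assumes the bundled Iris dataset and default hyperparameters apart from the few set explicitly; it is meant to show the common API, not a tuned model.

```python
from sklearn.cluster import KMeans
from sklearn.datasets import load_iris
from sklearn.decomposition import PCA
from sklearn.ensemble import RandomForestClassifier
from sklearn.linear_model import LogisticRegression
from sklearn.model_selection import train_test_split

# Iris ships with scikit-learn, so no download is needed.
X, y = load_iris(return_X_y=True)
X_train, X_test, y_train, y_test = train_test_split(
    X, y, test_size=0.25, random_state=42, stratify=y
)

# Classification: every estimator shares the same fit / score interface.
scores = {}
for model in (LogisticRegression(max_iter=1000), RandomForestClassifier(random_state=42)):
    model.fit(X_train, y_train)
    scores[type(model).__name__] = model.score(X_test, y_test)  # mean accuracy

# Clustering: unsupervised, so only X is passed to fit.
kmeans = KMeans(n_clusters=3, n_init=10, random_state=42).fit(X)
cluster_labels = kmeans.labels_

# Dimensionality reduction: project the 4 features onto 2 principal components.
pca = PCA(n_components=2)
X_2d = pca.fit_transform(X)
```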

Key Features for Robust Model Building

- Preprocessing: Tools for scaling features (MinMaxScaler, StandardScaler), encoding categorical features (OneHotEncoder, OrdinalEncoder; LabelEncoder is intended for target labels), and handling missing values (SimpleImputer).

- Model Selection and Evaluation: Cross-validation techniques (KFold, StratifiedKFold), hyperparameter tuning (GridSearchCV, RandomizedSearchCV), and metrics for evaluating model performance (accuracy, precision, recall, F1-score, ROC AUC, R-squared, MSE). These are vital for ensuring your predictive modeling is robust.

- Pipelines: Streamline your workflow by chaining multiple preprocessing steps and a final estimator into a single object, preventing data leakage and ensuring consistent transformations.
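Here is a minimal sketch tying preprocessing, a pipeline, cross-validation, and hyperparameter tuning together. The twelve-row `df` with `age`, `income`, `plan`, and `churned` columns is hypothetical toy data, far too small for a real evaluation; the point is the structure, in which the scaler and encoder are fitted only on each training fold.

```python
import pandas as pd
from sklearn.compose import ColumnTransformer
from sklearn.linear_model import LogisticRegression
from sklearn.model_selection import GridSearchCV, StratifiedKFold
from sklearn.pipeline import Pipeline
from sklearn.preprocessing import OneHotEncoder, StandardScaler

# Hypothetical churn-style dataset: two numeric features and one categorical.
df = pd.DataFrame({
    "age": [22, 35, 47, 52, 29, 41, 38, 60, 25, 33, 58, 45],
    "income": [28, 52, 61, 80, 33, 58, 49, 90, 30, 45, 85, 66],
    "plan": ["basic", "pro", "pro", "premium", "basic", "pro",
             "basic", "premium", "basic", "pro", "premium", "pro"],
    "churned": [1, 0, 0, 0, 1, 0, 1, 0, 1, 1, 0, 0],
})
X = df.drop(columns="churned")
y = df["churned"]

# Preprocessing: scale numeric columns, one-hot encode the categorical one.
preprocess = ColumnTransformer([
    ("num", StandardScaler(), ["age", "income"]),
    ("cat", OneHotEncoder(handle_unknown="ignore"), ["plan"]),
])

# The pipeline refits preprocessing inside every CV fold, so statistics
# from the validation fold never leak into training.
pipe = Pipeline([("prep", preprocess), ("clf", LogisticRegression())])

# Hyperparameter tuning with stratified 3-fold cross-validation and F1 scoring.
search = GridSearchCV(
    pipe,
    param_grid={"clf__C": [0.1, 1.0, 10.0]},
    cv=StratifiedKFold(n_splits=3, shuffle=True, random_state=0),
    scoring="f1",
)
search.fit(X, y)
best_C = search.best_params_["clf__C"]
```

Because the whole pipeline is the estimator being tuned, the fitted `search` object can be used directly for prediction (`search.predict(new_df)`) with identical preprocessing.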

Actionable Advice: For any tabular classification or regression task, make Scikit-learn your first port of call. Its extensive documentation and large community make learning and debugging efficient, and it remains a cornerstone for anyone working with machine learning algorithms.

Deep Learning Powerhouses: TensorFlow, Keras, and PyTorch

For more complex tasks involving large datasets, unstructured data (images, text, audio), and the need for sophisticated pattern recognition, deep learning frameworks are essential. These libraries enable the creation and training of neural networks, pushing the boundaries of what artificial intelligence can achieve.

TensorFlow: Google's End-to-End Platform

Developed by Google, TensorFlow is an open-source, end-to-end platform for machine learning. It provides a comprehensive ecosystem of tools, libraries, and community resources that lets researchers push the state-of-the-art in ML and developers easily build and deploy ML-powered applications. TensorFlow is particularly powerful for:

- Large-Scale Deployments: Excellent for production environments, capable of running on various platforms, from mobile devices to large-scale distributed systems.

- Custom Model Development: Offers a low-level API for intricate control over model architecture and training processes, favored by researchers exploring novel neural networks.

- TensorBoard: A powerful visualization tool for monitoring training metrics, visualizing graph computations, and debugging models.

Consideration: While powerful, TensorFlow's lower-level API (custom training loops, graph compilation with tf.function) has a steeper learning curve for beginners than Scikit-learn or Keras.
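To illustrate what working with the lower-level API looks like, the sketch below fits a one-variable linear model with `tf.GradientTape` and a hand-written gradient-descent update instead of Keras's `fit()`. The synthetic data (y = 3x + 2), learning rate, and step count are assumptions chosen so the example converges quickly.

```python
import tensorflow as tf

# Synthetic regression data following y = 3x + 2 (illustration only).
x = tf.constant([[0.0], [1.0], [2.0], [3.0]])
y = 3.0 * x + 2.0

# Trainable parameters created directly, without Keras layers.
w = tf.Variable(0.0)
b = tf.Variable(0.0)
learning_rate = 0.05

@tf.function  # traces the Python function into a TensorFlow graph
def train_step():
    with tf.GradientTape() as tape:
        loss = tf.reduce_mean(tf.square(w * x + b - y))
    # The tape recorded the forward pass, so gradients are computed explicitly.
    dw, db = tape.gradient(loss, [w, b])
    # Plain gradient-descent update written by hand.
    w.assign_sub(learning_rate * dw)
    b.assign_sub(learning_rate * db)
    return loss

for _ in range(500):
    final_loss = train_step()
```

Writing the loop yourself gives full control over every step, which is exactly the flexibility (and extra code) that higher-level APIs hide.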

Keras: High-Level API for Rapid Prototyping

Keras is a high-level API for building and training deep learning models, designed for fast experimentation. It has long been bundled with TensorFlow as tf.keras (earlier versions also ran on Theano or CNTK), and Keras 3 can run on TensorFlow, JAX, or PyTorch, abstracting away much of the complexity of the underlying framework. Keras is ideal for:

- Rapid Prototyping: Its user-friendly API allows for quick model definition and training with minimal code, perfect for iterating on ideas.

- Beginner-Friendly: Simpler syntax and fewer concepts to grasp, making it an excellent entry point into deep learning.

- Common Neural Network Architectures: Easily build Convolutional Neural Networks (CNNs) for image processing and Recurrent Neural Networks (RNNs) for sequential data like text.

Actionable Advice: If you're new to deep learning, start with Keras. It lets you focus on model architecture and problem-solving without getting bogged down in lower-level TensorFlow details such as custom training loops and graph compilation. It's a great tool for getting started with predictive modeling using neural networks.
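A minimal Keras sketch of a small binary classifier shows how little code a working model needs. The synthetic dataset, layer sizes, epochs, and batch size are illustrative assumptions; `import keras` assumes a recent Keras installation (with TensorFlow 2, `tf.keras` exposes the same calls).

```python
import numpy as np
import keras

keras.utils.set_random_seed(0)  # reproducible weight initialization

# Synthetic binary-classification data (hypothetical): the label is 1
# when the sum of the two features is positive.
rng = np.random.default_rng(0)
X = rng.normal(size=(500, 2)).astype("float32")
y = (X.sum(axis=1, keepdims=True) > 0).astype("float32")

# A small fully connected network defined layer by layer.
model = keras.Sequential([
    keras.Input(shape=(2,)),
    keras.layers.Dense(16, activation="relu"),
    keras.layers.Dense(1, activation="sigmoid"),
])

# compile() wires up the optimizer, loss, and metrics; fit() runs training.
model.compile(optimizer="adam", loss="binary_crossentropy", metrics=["accuracy"])
history = model.fit(X, y, epochs=50, batch_size=32, verbose=0)

# Predicted probabilities for the first five samples.
probabilities = model.predict(X[:5], verbose=0)
```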

PyTorch: Facebook's Dynamic Graph Framework

Developed by Facebook's AI Research lab, PyTorch has gained immense popularity, especially among researchers and those who prefer a more "Pythonic" approach to deep learning. Its key features include:

- Dynamic Computation Graphs: PyTorch builds the computation graph on the fly as your code runs, which allows more flexibility and easier debugging than the static graphs of TensorFlow 1.x (TensorFlow 2 has since adopted eager execution by default).

- Pythonic Interface: Integrates seamlessly with Python's data structures and control flow, making it feel more intuitive for Python developers.

- Strong Community and Research Focus: Heavily adopted in academic research due to its flexibility and ease of experimentation with novel architectures.
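The sketch below illustrates what a dynamic graph means in practice: the hypothetical `AdaptiveDepthNet` decides at run time, with an ordinary Python `for` loop, how many times to apply its hidden layer, and autograd differentiates whichever graph was actually executed on that pass. The architecture, synthetic target, and learning rate are illustrative assumptions, not a recommended design.

```python
import torch
import torch.nn as nn


class AdaptiveDepthNet(nn.Module):
    """Hypothetical model whose depth is chosen at run time by plain Python."""

    def __init__(self, width: int = 8):
        super().__init__()
        self.inp = nn.Linear(4, width)
        self.hidden = nn.Linear(width, width)
        self.out = nn.Linear(width, 1)

    def forward(self, x: torch.Tensor, n_repeats: int) -> torch.Tensor:
        h = torch.relu(self.inp(x))
        # An ordinary Python loop: the graph is rebuilt on every call, so the
        # number of hidden layers applied can change from pass to pass.
        for _ in range(n_repeats):
            h = torch.relu(self.hidden(h))
        return self.out(h)


torch.manual_seed(0)
model = AdaptiveDepthNet()
optimizer = torch.optim.SGD(model.parameters(), lr=0.05)
loss_fn = nn.MSELoss()

x = torch.randn(32, 4)
target = x.sum(dim=1, keepdim=True)  # synthetic regression target

losses = []
for step in range(100):
    n_repeats = step % 3  # a different graph depth on different steps
    optimizer.zero_grad()
    loss = loss_fn(model(x, n_repeats), target)
    loss.backward()  # autograd walks the graph recorded during this pass
    optimizer.step()
    losses.append(loss.item())
```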

Choosing between TensorFlow/Keras and PyTorch: For production deployment and large-scale enterprise solutions, TensorFlow (especially with the Keras API) has traditionally had a slight edge, helped by deployment tools such as TensorFlow Serving and TensorFlow Lite. For research, rapid experimentation, and a more interactive development experience, PyTorch is often preferred. Both are leading deep learning frameworks and continue to evolve.

Specialized Libraries for Enhanced Performance

Beyond the core ML libraries, several specialized tools offer significant performance improvements for specific types of tasks, particularly with tabular data.

XGBoost and LightGBM: Gradient Boosting Powerhouses

For structured, tabular data, gradient boosting machines (GBMs) often outperform other approaches, including deep learning models, in both accuracy and training speed. XGBoost (eXtreme Gradient Boosting) and LightGBM (Light Gradient Boosting Machine) are highly optimized implementations of gradient-boosted decision trees.

- High Performance: Both libraries are designed for speed and efficiency, handling large datasets with impressive training times.

- Accuracy: Frequently used in winning solutions of machine learning competitions such as those on Kaggle, thanks to their ability to capture complex, non-linear relationships in data.

- Feature Importance: Provide insights into which features contribute most to the model's predictions, aiding in feature engineering and interpretability.

When to Use: If you're working with datasets containing numerical and categorical features (e.g., customer data, sales records, financial data), XGBoost or LightGBM should be among your first choices for building high-performing predictive models. They are excellent additions to your suite of data science tools.

Visualization and Reporting: Matplotlib and Seaborn

While not strictly machine learning libraries, Matplotlib and Seaborn are indispensable for data analysis, offering powerful capabilities for visualizing data and model results. Effective data visualization is key to understanding your data, communicating insights, and evaluating model performance.

- Matplotlib: The foundational plotting library in Python, providing a highly customizable environment for creating static, interactive, and animated visualizations. It offers fine-grained control over every element of a plot.

- Seaborn: Built on top of Matplotlib, Seaborn provides a higher-level interface for drawing attractive and informative statistical graphics. It simplifies the creation of complex plots like heatmaps, pair plots, and violin plots, which are common in exploratory data analysis and model evaluation.

Best Practice: Use Seaborn for quick, aesthetically pleasing statistical plots and switch to Matplotlib for highly customized or niche visualizations. Visualizing your data at every step of the data analysis workflow is crucial for identifying patterns, validating assumptions, and presenting findings effectively.

Choosing the Right Library: A Strategic Approach

Selecting the optimal Python library for your data analysis task depends heavily on the nature of your data, the problem you're trying to solve, and your project's specific requirements. Here's a strategic approach:

- Start with Foundations: Always begin with Pandas for data loading, cleaning, and initial manipulation, supported by NumPy for underlying numerical operations.

- Traditional ML & Tabular Data: For most classification, regression, and clustering tasks on structured, tabular data, Scikit-learn is your primary choice. If performance is critical on such data, consider XGBoost or LightGBM.

- Deep Learning & Unstructured Data: For images, text, audio, or very large datasets requiring complex pattern recognition, dive into Keras (for ease of use and rapid prototyping) or PyTorch (for flexibility and research). TensorFlow, Keras's long-standing backend, is well suited to large-scale deployment.

- Visualization: Leverage Seaborn for quick statistical plots and Matplotlib for detailed customization to effectively communicate your data-driven insights.

Actionable Tip: Don't try to learn all libraries at once. Master Pandas and Scikit-learn first, as they cover a vast majority of common data analysis and machine learning tasks. Then, expand your toolkit as your projects demand more specialized capabilities or delve into deep learning.

Frequently Asked Questions

What is the most essential Python library for data analysis beginners?

For beginners in data analysis, Pandas is undeniably the most essential Python library to master. It provides robust and intuitive data structures like DataFrames, making it incredibly easy to load, clean, transform, and explore structured datasets. A strong grasp of Pandas lays the groundwork for all subsequent data manipulation and machine learning tasks, serving as the gateway to the broader ecosystem of data science tools.

How do Scikit-learn, TensorFlow, and PyTorch differ in their primary use cases?

Scikit-learn is primarily used for traditional machine learning algorithms (classification, regression, clustering) on structured, tabular data, known for its consistent API and ease of use. TensorFlow and PyTorch, on the other hand, are powerful deep learning frameworks specifically designed for building and training neural networks, excelling with unstructured data (images, text, audio) and large-scale, complex models. TensorFlow is often favored for production deployment due to its comprehensive ecosystem, while PyTorch is popular in research for its flexibility and Pythonic interface for dynamic neural networks.

Can I use multiple machine learning libraries together in a single project?

Absolutely! Combining several Python libraries in a single data analysis project is very common and often beneficial. For instance, you might use Pandas for initial data cleaning and preprocessing, then feed the prepared data into a Scikit-learn model for traditional classification, or into Keras (running on TensorFlow) for a deep learning solution. You could then use Matplotlib or Seaborn to visualize the results. This modular approach leverages the strengths of each library and makes the overall data analysis workflow more efficient.

What are LSI keywords and why are they important for content like this?

LSI (Latent Semantic Indexing) keywords is an SEO term for conceptually related terms and phrases associated with a primary topic. They are not simply synonyms but words that frequently appear together in high-quality content about a specific subject. For an article on the best Python machine learning libraries for data analysis, related terms like "data science tools," "predictive modeling," "deep learning frameworks," "statistical analysis," and "feature engineering" help search engines understand the broader context and depth of the content. Including them naturally throughout the article signals comprehensive coverage, supports topical authority, and can improve the chances of ranking for a wider range of relevant queries that match user search intent.
