Revolutionizing Robotics: AI's Pivotal Role in Object Recognition and Navigation

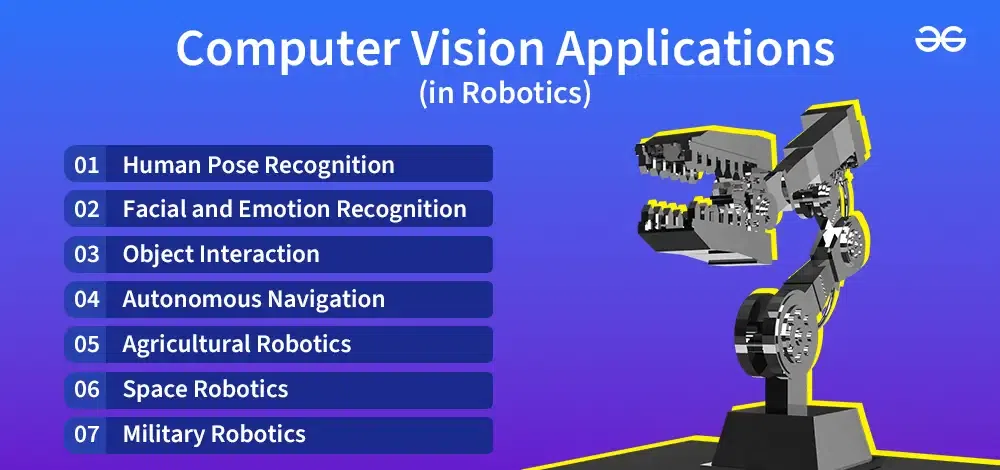

The combination of artificial intelligence (AI) and robotics underpins today's autonomous machines. Central to that combination is AI's ability to give robots object recognition and navigation capabilities. This guide examines how AI enables robots to perceive, understand, and interact with their surroundings. From deep learning models and computer vision systems to SLAM (Simultaneous Localization and Mapping) techniques, we explore the core technologies that allow autonomous robots to navigate complex environments, improving efficiency and safety across industries.

The Symbiotic Relationship: AI and Robotic Intelligence

The journey towards fully autonomous robots hinges on their capacity to emulate human-like perception and decision-making. This is precisely where AI steps in, transforming rigid, pre-programmed machines into adaptive, learning entities. Without AI, robots would remain confined to highly structured, predictable environments, incapable of responding to unexpected changes or unstructured data. AI provides the cognitive framework, allowing robots to interpret sensory input, make informed decisions, and execute actions with unprecedented precision. This integration is not merely an enhancement; it is the fundamental shift that unlocks the true potential of robotics, driving innovation in everything from industrial automation to healthcare and exploration.

From Pre-programmed to Perceptive: The Evolution

- Rule-based limitations: Traditional robots operate on explicit rules, failing when conditions deviate even slightly.

- AI's adaptability: Machine learning algorithms enable robots to learn from data, generalize patterns, and adapt to dynamic situations.

- Cognitive robotics: AI bestows robots with the ability to "understand" their environment, not just react to it, leading to more robust and reliable performance.

Object Recognition: The "Eyes" and "Understanding" of Robotics

For a robot to operate effectively in any environment, it must first be able to "see" and "understand" the objects around it. This is the domain of object recognition, a core application of AI and of computer vision in particular. It involves identifying and locating objects within an image or video stream, classifying them, and often estimating their pose (position and orientation). This capability is indispensable for tasks ranging from picking and placing items in a warehouse to identifying surgical instruments in an operating room or detecting obstacles in an autonomous vehicle's path.

Core Technologies Powering Robotic Object Recognition

The advancements in deep learning have propelled object recognition capabilities far beyond traditional methods. Neural networks, especially Convolutional Neural Networks (CNNs), are at the forefront.

- Deep Learning Models (CNNs): These are the workhorses of modern object recognition. CNNs are specifically designed to process pixel data, automatically learning hierarchical features from raw images. They can detect edges, textures, shapes, and ultimately, entire objects. Popular detection architectures include YOLO (You Only Look Once) and SSD (Single Shot MultiBox Detector), which are single-stage detectors designed for real-time speed, and Faster R-CNN, a two-stage detector that typically trades some speed for accuracy.

- Data Annotation and Training: The effectiveness of deep learning models heavily relies on vast amounts of meticulously labeled data. Humans annotate images, outlining objects and assigning labels (e.g., "cup," "chair," "person"). This supervised learning process teaches the neural network to associate visual patterns with specific object classes.

- Feature Extraction and Matching: Beyond deep learning, traditional methods like SIFT (Scale-Invariant Feature Transform) and SURF (Speeded Up Robust Features) are still used, particularly for specific object tracking or landmark recognition. These algorithms extract unique points of interest from images, which can then be matched across different views to identify objects or track their movement.

- 3D Perception: For robots operating in the physical world, 2D image recognition is often insufficient. 3D object recognition, utilizing depth cameras (like Intel RealSense or Microsoft Kinect) or LiDAR, provides crucial spatial information. Point cloud processing and 3D reconstruction algorithms enable robots to understand an object's true shape and volume, vital for manipulation tasks.
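Detectors such as the ones named above usually emit many overlapping candidate boxes for the same object, so a common post-processing step is non-maximum suppression (NMS) based on intersection over union (IoU). The following is a minimal sketch in plain Python, not the implementation used by any particular framework; the box format `(x_min, y_min, x_max, y_max)`, the function names, and the 0.5 threshold are assumptions chosen for illustration.

```python
from typing import List, Sequence, Tuple

# Assumed box format: (x_min, y_min, x_max, y_max) in pixel coordinates.
Box = Tuple[float, float, float, float]


def iou(a: Box, b: Box) -> float:
    """Intersection over union of two axis-aligned boxes."""
    inter_w = max(0.0, min(a[2], b[2]) - max(a[0], b[0]))
    inter_h = max(0.0, min(a[3], b[3]) - max(a[1], b[1]))
    inter = inter_w * inter_h
    area_a = (a[2] - a[0]) * (a[3] - a[1])
    area_b = (b[2] - b[0]) * (b[3] - b[1])
    union = area_a + area_b - inter
    return inter / union if union > 0 else 0.0


def non_max_suppression(
    boxes: Sequence[Box], scores: Sequence[float], iou_threshold: float = 0.5
) -> List[int]:
    """Return indices of the boxes to keep, highest score first."""
    order = sorted(range(len(boxes)), key=lambda i: scores[i], reverse=True)
    keep: List[int] = []
    for i in order:
        # Keep a box only if it does not overlap too much with a kept box.
        if all(iou(boxes[i], boxes[j]) <= iou_threshold for j in keep):
            keep.append(i)
    return keep


# Hypothetical detections: two overlapping boxes and one separate box.
example_boxes = [(0.0, 0.0, 10.0, 10.0), (1.0, 1.0, 11.0, 11.0), (20.0, 20.0, 30.0, 30.0)]
example_scores = [0.9, 0.8, 0.7]
print(non_max_suppression(example_boxes, example_scores))  # prints [0, 2]
```

The second box overlaps the first with an IoU of about 0.68, so it is suppressed, while the third box does not overlap anything and is kept. In practice the threshold is tuned per application: lower values suppress more aggressively, which can hurt in the cluttered scenes discussed in the next section.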

Challenges in Robust Object Recognition

Despite significant progress, achieving universally robust object recognition remains challenging:

- Varying Lighting Conditions: Changes in illumination can drastically alter an object's appearance.

- Occlusion: Objects being partially hidden by others.

- Pose Variation: An object appearing differently from various angles.

- Clutter: Dense environments with many overlapping objects.

- Novelty Detection: Identifying objects not seen during training.

- Real-time Performance: Balancing accuracy with the speed required for dynamic robotic operations.

Navigation: The "Brain" and "Movement" of Robotics

Once a robot can "see" and "understand" its environment through object recognition, the next crucial step is to move intelligently within it. This is the realm of robotic navigation, a complex interplay of perception, localization, mapping, and path planning. AI-driven navigation allows robots to move autonomously from one point to another, avoiding obstacles, optimizing routes, and adapting to dynamic changes in their surroundings.

Pillars of AI-Powered Robotic Navigation

Autonomous navigation relies on several integrated AI and algorithmic components:

- Simultaneous Localization and Mapping (SLAM): This is arguably the most fundamental challenge in autonomous navigation. A robot needs to know where it is (localization) and simultaneously build a map of its unknown environment (mapping). SLAM algorithms, often employing probabilistic methods like Extended Kalman Filters (EKF), Particle Filters, or graph-based optimization, allow robots to achieve this. Visual SLAM uses cameras, while LiDAR SLAM uses laser scanners, often combined for greater robustness.

- Path Planning Algorithms: Once localized within a map, the robot needs to determine an optimal path to its goal. AI-driven path planning considers factors like shortest distance, energy efficiency, smoothness of movement, and obstacle avoidance. Algorithms such as A* (A-star), RRT (Rapidly-exploring Random Tree), and their variants are commonly used. These algorithms can generate global paths (pre-computed based on the full map) and local paths (real-time adjustments based on immediate sensor readings).

- Sensor Fusion Techniques: Robots typically employ multiple types of sensors – cameras, LiDAR, ultrasonic sensors, IMUs (Inertial Measurement Units), GPS, etc. No single sensor provides a complete picture, and each has its limitations. Sensor fusion techniques, often leveraging Kalman filters or particle filters, integrate data from these diverse sensors to create a more accurate and robust understanding of the robot's state and environment. This multi-modal data processing significantly enhances reliability, especially in challenging conditions.

- Obstacle Avoidance: This is a real-time, safety-critical function. While path planning sets a general route, obstacle avoidance ensures the robot dynamically reacts to unexpected static or moving objects. This often involves local perception, using proximity sensors or real-time object recognition, to make immediate evasive maneuvers. AI models can learn to predict the movement of dynamic obstacles (e.g., people, other robots) and plan accordingly.

- Environmental Perception: This overarching concept integrates all sensory data to build a comprehensive understanding of the robot's surroundings. It includes not just detecting objects but also identifying traversable areas, understanding semantic information (e.g., "this is a door," "this is a wall"), and even predicting future states of dynamic elements.
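To make the path planning step above more concrete, here is a minimal sketch of A* on a small occupancy grid. It assumes a 4-connected grid where `0` is free and `1` is blocked, a unit cost per move, and a Manhattan-distance heuristic; the function name `astar` and the example map are illustrative, and a real planner would also account for the robot's footprint and kinematics.

```python
import heapq
from typing import Dict, List, Optional, Tuple

Cell = Tuple[int, int]  # (row, col)


def astar(grid: List[List[int]], start: Cell, goal: Cell) -> Optional[List[Cell]]:
    """Shortest 4-connected path on a grid (0 = free, 1 = blocked), or None."""
    rows, cols = len(grid), len(grid[0])

    def heuristic(cell: Cell) -> int:
        # Manhattan distance never overestimates on a 4-connected unit-cost grid.
        return abs(cell[0] - goal[0]) + abs(cell[1] - goal[1])

    open_heap = [(heuristic(start), 0, start)]  # (f = g + h, g, cell)
    best_cost: Dict[Cell, int] = {start: 0}
    came_from: Dict[Cell, Cell] = {}

    while open_heap:
        _, cost, current = heapq.heappop(open_heap)
        if cost > best_cost[current]:
            continue  # stale heap entry; a cheaper route was found already
        if current == goal:
            path = [current]
            while current in came_from:
                current = came_from[current]
                path.append(current)
            return path[::-1]
        r, c = current
        for nxt in ((r + 1, c), (r - 1, c), (r, c + 1), (r, c - 1)):
            nr, nc = nxt
            if 0 <= nr < rows and 0 <= nc < cols and grid[nr][nc] == 0:
                new_cost = cost + 1
                if new_cost < best_cost.get(nxt, float("inf")):
                    best_cost[nxt] = new_cost
                    came_from[nxt] = current
                    heapq.heappush(open_heap, (new_cost + heuristic(nxt), new_cost, nxt))
    return None  # goal is unreachable


# Hypothetical map: the only way through the second row is the gap at column 2.
example_grid = [
    [0, 0, 0, 0],
    [1, 1, 0, 1],
    [0, 0, 0, 0],
    [0, 1, 1, 0],
]
print(astar(example_grid, (0, 0), (3, 3)))
# prints [(0, 0), (0, 1), (0, 2), (1, 2), (2, 2), (2, 3), (3, 3)]
```

This corresponds to the global planning role described above: the path is computed from the full map. A local planner would then adjust it in real time from sensor readings, which this sketch does not attempt.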

The Synergy: How Object Recognition Fuels Navigation

It's crucial to understand that object recognition and navigation are not isolated functions; they are deeply intertwined, with AI serving as the connective tissue. Effective navigation fundamentally relies on accurate object recognition. For instance:

- Semantic Mapping: Object recognition allows robots to build "semantic maps," not just geometric ones. Instead of just knowing "there's an obstacle here," the robot knows "there's a chair here," or "this is a workstation." This semantic understanding enables more intelligent path planning and decision-making.

- Goal-Oriented Navigation: If a robot's goal is to "find and pick up the red box," object recognition identifies the red box, and navigation plans the route to it. Without accurate recognition, the navigation system wouldn't know its target.

- Dynamic Obstacle Avoidance: Real-time object recognition is vital for identifying moving obstacles (people, forklifts) and predicting their trajectories, allowing the navigation system to plan safe evasive actions.

- Localization with Landmarks: Robots can use recognized objects as landmarks to improve their localization accuracy within a mapped environment, especially in GPS-denied areas.

- Interaction Planning: For collaborative robots, object recognition helps identify tools, workpieces, and even human co-workers, enabling safe and efficient interaction planning.
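The dynamic obstacle avoidance point above depends on predicting where a recognized object will be. One simple baseline, sketched below, is to assume that both the robot and the obstacle keep a constant velocity and then compute the time and distance of their closest approach. The constant-velocity model, the function names, and the safety radius are assumptions for illustration; learned motion predictors would replace the straight-line model.

```python
import math
from typing import Tuple

Vec = Tuple[float, float]  # 2D position (m) or velocity (m/s)


def closest_approach(
    robot_pos: Vec, robot_vel: Vec, obs_pos: Vec, obs_vel: Vec, horizon: float
) -> Tuple[float, float]:
    """Time (s) and distance (m) of closest approach within [0, horizon].

    Assumes both the robot and the obstacle move at constant velocity.
    """
    # Work in the robot's frame: relative position and relative velocity.
    px, py = obs_pos[0] - robot_pos[0], obs_pos[1] - robot_pos[1]
    vx, vy = obs_vel[0] - robot_vel[0], obs_vel[1] - robot_vel[1]
    speed_sq = vx * vx + vy * vy
    if speed_sq == 0.0:
        t = 0.0  # no relative motion, so the distance never changes
    else:
        # Minimize |p + v t|^2, then clamp to the prediction horizon.
        t = -(px * vx + py * vy) / speed_sq
        t = min(max(t, 0.0), horizon)
    return t, math.hypot(px + vx * t, py + vy * t)


def needs_evasion(
    robot_pos: Vec, robot_vel: Vec, obs_pos: Vec, obs_vel: Vec,
    safety_radius: float, horizon: float,
) -> bool:
    """True if the predicted closest distance violates the safety radius."""
    _, distance = closest_approach(robot_pos, robot_vel, obs_pos, obs_vel, horizon)
    return distance < safety_radius


# Hypothetical scene: robot drives along +x, a person walks along +y across its path.
print(closest_approach((0.0, 0.0), (1.0, 0.0), (4.0, -4.0), (0.0, 1.0), 10.0))
# prints (4.0, 0.0)
```

In this example the two paths intersect after four seconds, so `needs_evasion` would return `True` for any positive safety radius and the navigation system would have to slow down or replan.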

Real-World Applications and Actionable Insights

The impact of AI in robotics for object recognition and navigation is profound and far-reaching, transforming industries and creating new possibilities for automation.

Industry Use Cases

- Logistics and Warehousing: Autonomous Mobile Robots (AMRs) use AI to navigate precisely through complex warehouse layouts, recognizing shelves and pallets and avoiding human workers. This enhances efficiency in order fulfillment and inventory management.

- Healthcare: Surgical robots employ advanced computer vision systems for precise instrument tracking and tissue recognition, while hospital delivery robots navigate corridors, avoiding patients and staff.

- Automotive (Self-Driving Cars): A prime example, autonomous vehicles rely heavily on AI for perceiving road signs, traffic lights, pedestrians, other vehicles, and navigating diverse road conditions.

- Manufacturing: Collaborative robots (cobots) utilize AI to recognize workpieces, tools, and human operators, enabling safe and efficient collaboration on assembly lines.

- Agriculture: AI-powered agricultural robots can identify crops, weeds, and pests, navigating fields to apply treatments precisely, reducing waste.

- Exploration and Inspection: Drones and subsea robots use AI for mapping unknown terrains, identifying geological features, or inspecting infrastructure for defects.

Actionable Tips for Developers and Integrators

For those looking to implement or develop AI-driven robotic solutions, consider these practical recommendations:

- Data is King: Invest heavily in acquiring and meticulously labeling diverse datasets for object recognition. The quality and quantity of your training data directly correlate with model performance. Consider data augmentation strategies to enhance dataset variety.

- Choose the Right Sensors: Select sensors (LiDAR, cameras, radar, ultrasonic) based on your environment's specific challenges (lighting, dust, range, cost). Often, a combination of sensors with robust sensor fusion techniques yields the best results.

- Prioritize Real-time Performance: For dynamic environments, low latency in perception and decision-making is critical. Optimize your AI models for inference speed and consider edge computing solutions to process data closer to the source.

- Robust Error Handling: Robots will encounter novel situations. Implement robust error detection, fallback mechanisms, and graceful degradation strategies to ensure safety and continuous operation.

- Simulation for Development and Testing: Utilize robotic simulation environments (e.g., Gazebo, Unity, Isaac Sim) to rapidly prototype, test, and refine AI models and navigation algorithms in a safe and cost-effective manner before deployment in the physical world.

- Ethical AI Considerations: As robots become more autonomous, address biases in data, ensure transparency in decision-making, and prioritize safety and privacy in design.
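As an illustration of the data augmentation strategies mentioned in the first tip above, the sketch below mirrors an image horizontally and transforms its bounding-box labels to match. It assumes the image is a list of pixel rows and that boxes are `(x_min, y_min, x_max, y_max)` in continuous pixel coordinates with the origin at the top-left corner; the helper names are hypothetical, and production pipelines normally rely on an augmentation library instead.

```python
from typing import List, Tuple

Box = Tuple[float, float, float, float]  # assumed (x_min, y_min, x_max, y_max)
Image = List[List[int]]                  # assumed rows of grayscale pixel values


def hflip_image(image: Image) -> Image:
    """Mirror the image left to right by reversing every row."""
    return [row[::-1] for row in image]


def hflip_box(box: Box, image_width: float) -> Box:
    """Mirror a box so that it still covers the same object after the flip."""
    x_min, y_min, x_max, y_max = box
    # The old right edge becomes the new left edge, and vice versa.
    return (image_width - x_max, y_min, image_width - x_min, y_max)


def augment_with_flip(image: Image, boxes: List[Box]) -> Tuple[Image, List[Box]]:
    """Return a flipped copy of an annotated sample; labels must move with pixels."""
    width = len(image[0])
    return hflip_image(image), [hflip_box(b, width) for b in boxes]


# Hypothetical label on a 100-pixel-wide image.
print(hflip_box((10.0, 20.0, 30.0, 40.0), 100.0))  # prints (70.0, 20.0, 90.0, 40.0)
```

The key point is that geometric augmentations must be applied to the annotations as well as the pixels; otherwise the added samples teach the model wrong object locations.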

Future Trends and Emerging Horizons

The field of AI in robotics is continuously evolving. Expect to see further advancements in:

- Reinforcement Learning for Navigation: Training robots to learn optimal navigation policies through trial and error, particularly in complex, unknown environments.

- Generative AI for Data Synthesis: Using AI to create synthetic training data, reducing the reliance on costly manual annotation.

- Explainable AI (XAI): Developing AI models whose decisions can be understood by humans, crucial for debugging and trust, especially in safety-critical applications.

- Human-Robot Collaboration: More sophisticated AI for understanding human intent and gestures, leading to seamless collaboration in shared workspaces.

- Edge AI and On-device Processing: Bringing powerful AI computations closer to the robot, enabling faster decision-making and reducing reliance on cloud connectivity.

Frequently Asked Questions

What is the primary role of AI in robotic object recognition?

The primary role of AI in robotic object recognition is to enable robots to perceive, identify, and categorize objects in their environment, much like humans do. This is predominantly achieved through deep learning models, especially Convolutional Neural Networks (CNNs), which are trained on vast datasets to learn complex visual patterns. AI allows robots to go beyond simple shape detection to understand the semantic meaning of objects, enabling more intelligent interaction and decision-making in tasks like grasping, sorting, or avoiding specific items. It provides the "eyes" and the "understanding" for robotic perception.

How do robots use AI for autonomous navigation?

Robots use AI for autonomous navigation by integrating several key capabilities: Simultaneous Localization and Mapping (SLAM), which allows the robot to build a map of its surroundings while simultaneously figuring out its own position within that map; path planning strategies, where AI algorithms determine the most efficient and safe route to a destination; and real-time obstacle avoidance, utilizing sensor data to react to dynamic changes. AI processes data from various sensors (LiDAR, cameras, ultrasonic) using sensor fusion techniques to create a robust environmental model, allowing the robot to move intelligently and adaptively through complex and changing environments.
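The sensor fusion mentioned in this answer can be illustrated with a one-dimensional Kalman filter, where each new range reading pulls the estimate toward it in proportion to how much it is trusted. This is only a minimal sketch under simplifying assumptions: a single scalar state, Gaussian noise, and made-up variances for a LiDAR and an ultrasonic sensor. The function names are illustrative.

```python
from typing import Tuple


def kalman_predict(mean: float, var: float, motion: float, motion_var: float) -> Tuple[float, float]:
    """Motion step: shift the estimate and grow its uncertainty."""
    return mean + motion, var + motion_var


def kalman_update(mean: float, var: float, measurement: float, meas_var: float) -> Tuple[float, float]:
    """Measurement step: blend the estimate with a noisy reading."""
    gain = var / (var + meas_var)  # near 1 when the sensor is trusted more
    new_mean = mean + gain * (measurement - mean)
    new_var = (1.0 - gain) * var
    return new_mean, new_var


# Hypothetical numbers: a vague prior, then a precise LiDAR reading,
# then a noisier ultrasonic reading of the same distance (metres).
mean, var = 0.0, 4.0
mean, var = kalman_update(mean, var, measurement=2.0, meas_var=1.0)  # LiDAR
mean, var = kalman_update(mean, var, measurement=1.0, meas_var=4.0)  # ultrasonic
print(round(mean, 3), round(var, 3))
```

After both updates the estimate sits closer to the LiDAR reading, because its assumed variance is lower, and the fused variance is smaller than that of either sensor alone. That reduction in uncertainty is the benefit sensor fusion is meant to deliver.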

What are the main challenges for AI in robotics object recognition and navigation?

Several significant challenges persist for AI in robotic object recognition and navigation. For object recognition, challenges include varying lighting conditions, partial object occlusion, objects appearing from different angles (pose variation), crowded scenes, and the need to identify objects not seen during training. For navigation, challenges involve operating in dynamic environments with unpredictable elements, achieving robust performance in GPS-denied or feature-poor areas, ensuring real-time responsiveness for safety-critical operations, and meeting the computational demands of processing large volumes of sensor data. Overcoming these often requires sophisticated algorithms, extensive data, and robust hardware.

Can AI-powered robots adapt to new environments without reprogramming?

Yes. A significant advantage of AI-powered robots, particularly those using machine learning and reinforcement learning, is that they can adapt to new environments without extensive reprogramming. Unlike traditional rule-based systems, they learn from experience and generalize patterns. For instance, a robot trained in one warehouse can adapt to a new layout by continuously updating its internal map and refining its navigation strategies as it interacts with the new space. This adaptability is crucial for deploying robots in diverse and dynamic real-world settings, reducing deployment costs and increasing versatility.
